Table of Contents

Introduction

Over the next few posts, we will investigate decision boundaries. A decision boundary is a graphical representation of the solution to a classification problem. Decision boundaries can help us to understand what kind of solution might be appropriate for a problem. They can also help us to understand the how various machine learning classifiers arrive at a solution.

In this post, we will look at a problem’s optimal decision boundary, which we can find when we know exactly how our data was generated. The optimal decision boundary represents the “best” solution possible for that problem. Consequently, by looking at the complexity of this boundary and at how much error it produces, we can get an idea of the inherent difficulty of the problem.

Unless we have generated the data ourselves, we won’t usually be able to find the optimal boundary. Instead, we approximate it using a classifier. A good machine learning classifier tries to approximate the optimal boundary for a problem as closely as possible.

In future posts, we will look at the approximating boundary created by various classification algorithms. We will investigate the strategy the classifier uses to create this boundary and how this boundary evolves as the classifier is trained on more and more data. There are many classification algorithms available to a data scientist – regression, discriminant analysis, decision trees, neural networks, to name a few – and it is important to understand which algorithm is appropritate for the problem at hand. Decision boundaries can help us to do this.

Optimal Boundaries

A classification problem asks: given some observations of a thing, what is the best way to assign that thing to a class based on some of its features? For instance, we might want to predict whether a person will like a movie or not based on some data we have about them, the “features” of that person.

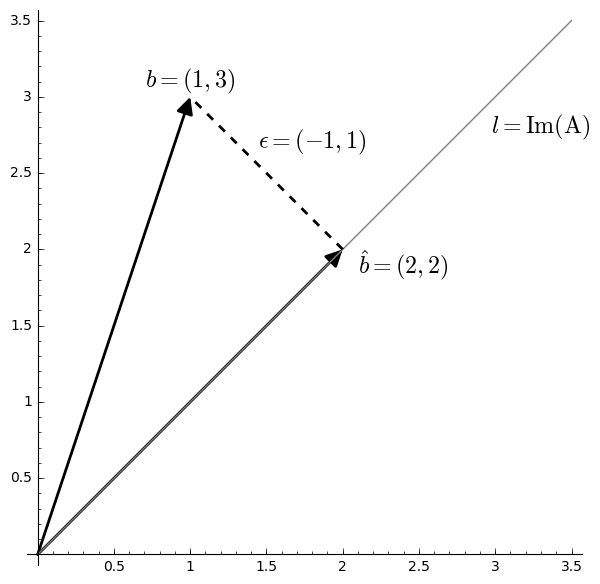

A solution to the classification problem is a rule that partitions the features and assigns each all the features of a partition to the same class. The “boundary” of this partitioning is the decision boundary of the rule.

It might be that two observations have exactly the same features, but are assigned to different classes. (Two things that look the same in the ways we’ve observed might differ in ways we haven’t observed.) In terms of probabilities this means both \[P(C = 0 \mid X) \gt 0\] and \[P(C = 1 \mid X) \gt 0\] In other words, we might not be able with full certainty to classify an observation. We could however assign the observation to its most probable class. This gives us the decision rule \[ \hat{C} = \operatorname*{argmax}_c P(C = c \mid X) \]

The boundary that this rule produces is the optimal decision boundary. It is the MAP estimate of the class label, and it is the rule that minimizes classification error under the zero-one loss function. We will look at error and loss more in a future post.

We will consider binary classification problems, meaning, there will only be two possible classes, 0 or 1. For a binary classification problem, the optimal boundary occurs at those points where each class is equally probable: \[ P(C = 0 \mid X) = P(C = 1 \mid X) \]

Prepare R

We will use R to do our analysis. We’ll have a chance to try out gganimate and patchwork, a couple of newer packages that Thomas Lin Pedersen has been working on; they are really nice.

Here we’ll define some functions to produce plots of our examples. All of these assume a classification problem where our response is binary, \(C \in \{0, 1\}\), and is predicted by two continuous features, \((X, Y)\).

Briefly, they are

gg_sample:: creates a layer for a sample of the features colored by class.gg_density:: creates a layer of contour plots for feature densities within each class.gg_optimal:: creates a layer showing an optimal decision boundary.gg_mix_label:: creates a layer labelling components in a mixture distribution.

library(magrittr)

library(tidyverse)

library(ggplot2)

library(gganimate)

library(patchwork)

theme_set(theme_linedraw() +

theme(plot.title = element_text(size = 20),

legend.position = "none",

axis.text.x = element_blank(),

axis.text.y = element_blank(),

axis.title.x = element_blank(),

axis.title.y = element_blank(),

aspect.ratio = 1))

#' Make a sample layer

#'

#' @param data data.frame: a sample with continuous features `x` and `y`

#' grouped by factor `class`

#' @param classes (optional) a vector of which levels of `class` to

#' plot; default is to plot data from all classes

gg_sample <- function(data, classes = NULL, size = 3, alpha = 0.5, ...) {

if (is.null(classes)) {

subdata <- data

} else {

subdata <- filter(data, class %in% classes)

}

list(geom_point(data = subdata,

aes(x, y,

color = factor(class),

shape = factor(class)),

size = size,

alpha = alpha,

...),

scale_colour_discrete(drop = TRUE,

limits = levels(factor(data$class))))

}

#' Make a density layer

#'

#' @param data data.frame: a data grid of features `x` and `y` with contours `z`

#' @param data character: the name of the contour column

gg_density <- function(data, z, size = 1, color = "black", alpha = 1, ...) {

z <- ensym(z)

geom_contour(data = data,

aes(x, y, z = !!z),

size = size,

color = color,

alpha = alpha,

...)

}

#' Make an optimal boundary layer

#'

#' @param data data.frame: a data grid of features `x` and `y` with a column with

#' the `optimal` boundary contours

#' @param breaks numeric: which contour levels of `optimal` to plot

gg_optimal <- function(data, breaks = c(0), ...) {

gg_density(data, z = optimal, breaks = breaks, ...)

}

#' Make a layer of component labels for a mixture distribution with two classes

#'

#' @param mus list(data.frame): the means for components of each class; every row

#' is a mean, each column is a coordinate

#' @param classes (optional) a vector of which levels of class to plot

gg_mix_label <- function(mus, classes = NULL, size = 10, ...) {

ns <- map_int(mus, nrow)

component <- do.call(c, map(ns, seq_len))

class <- do.call(c, map2(0:(length(ns) - 1), ns, rep.int))

mu_all <- do.call(rbind, mus)

data <- cbind(mu_all, component, class) %>%

set_colnames(c("x", "y", "component", "class")) %>%

as_tibble()

if (is.null(classes)) {

subdata <- data

} else {

subdata <- filter(data, class %in% classes)

}

list(shadowtext::geom_shadowtext(data = subdata,

mapping = aes(x, y,

label = component,

color = factor(class)),

size = size,

...),

scale_colour_discrete(drop = TRUE,

limits = levels(factor(data$class))))

}

Decision Boundaries for Continuous Features

Decision boundaries are most easily visualized whenever we have continuous features, most especially when we have two continuous features, because then the decision boundary will exist in a plane.

With two continuous features, the feature space will form a plane, and a decision boundary in this feature space is a set of one or more curves that divide the plane into distinct regions. Inside of a region, all observations will be assigned to the same class.

As mentioned above, whenever we know exactly how our data was generated, we can produce the optimal decision boundary. Though this won’t usually be possible in practice, investigating the optimal boundaries produced from simulated data can still help us to understand their properties.

We will look at the optimal boundary for a binary classification problem on a with features on a couple of common distributions: a multivariate normal distribution and a mixture of normal distributions.

Normally Distributed Features

In a binary classification problem, whenever the features for each class jointly have a multivariate normal distribution, the optimal decision boundary is relatively simple. We will start our investigation here.

With two features, the feature space is a plane. It can be shown that the optimal decision boundary in this case will either be a line or a conic section (that is, an ellipse, a parabola, or a hyperbola). With higher dimesional feature spaces, the decision boundary will form a hyperplane or a quadric surface.

We will consider classification problems with two classes, \(C = {0, 1}\), and two features, \(X\) and \(Y\). Each class will be Bernoulli distributed and the features for each class will be distributed normally. Specifically,

| Classes | \( C \sim \operatorname{Bernoulli}(p) \) |

| Features for Class 0 | \( (X, Y) \mid C = 0 \sim \operatorname{Normal}(\mu_0, \Sigma_0) \) |

| Features for Class 1 | \( (X, Y) \mid C = 1 \sim \operatorname{Normal}(\mu_0, \Sigma_1) \) |

Our goal is to produce two kinds of visualizations: one, of a sample from these distributions, and two, the contours of the class-conditional densities for each feature. We’ll use the mvnfast package to help us with computations on the joint MVN.

Samples

Let’s choose some values for our parameters. We’ll start with the case when the classes occur equally often. For our features, we’ll choose means so that there is some significant overlap between the two classes, and covariance matrices so that the distributions have a nice elliptical shape.

p <- 0.5

mu_0 <- c(0, 2)

sigma_0 <- matrix(c(1, 0.3, 0.3, 1), nrow = 2)

mu_1 <- c(2, 0)

sigma_1 <- matrix(c(1, -0.3, -0.3, 1), nrow = 2)

Now we’ll write a function to create a dataframe containing a sample of classified features from our distribution.

#' Generate normally distributed feature samples for a binary

#' classification problem

#'

#' @param n integer: the size of the sample

#' @param mean_0 vector: the mean vector of the first class

#' @param sigma_0 matrix: the 2x2 covariance matrix of the first class

#' @param mean_1 vector: the mean vector of the second class

#' @param sigma_1 matrix: the 2x2 covariance matrix of the second class

#' @param p_0 double: the prior probability of class 0

make_mvn_sample <- function(n, mu_0, sigma_0, mu_1, sigma_1, p_0) {

n_0 <- rbinom(1, n, p_0)

n_1 <- n - n_0

sample_mvn <- as_tibble(

rbind(mvnfast::rmvn(n_0,

mu = mu_0,

sigma = sigma_0),

mvnfast::rmvn(n_1,

mu = mu_1,

sigma = sigma_1)))

sample_mvn[1:n_0, 3] <- 0

sample_mvn[(n_0 + 1):(n_0 + n_1), 3] <- 1

sample_mvn <- sample_mvn[sample(nrow(sample_mvn)), ]

colnames(sample_mvn) <- c("x", "y", "class")

sample_mvn

}

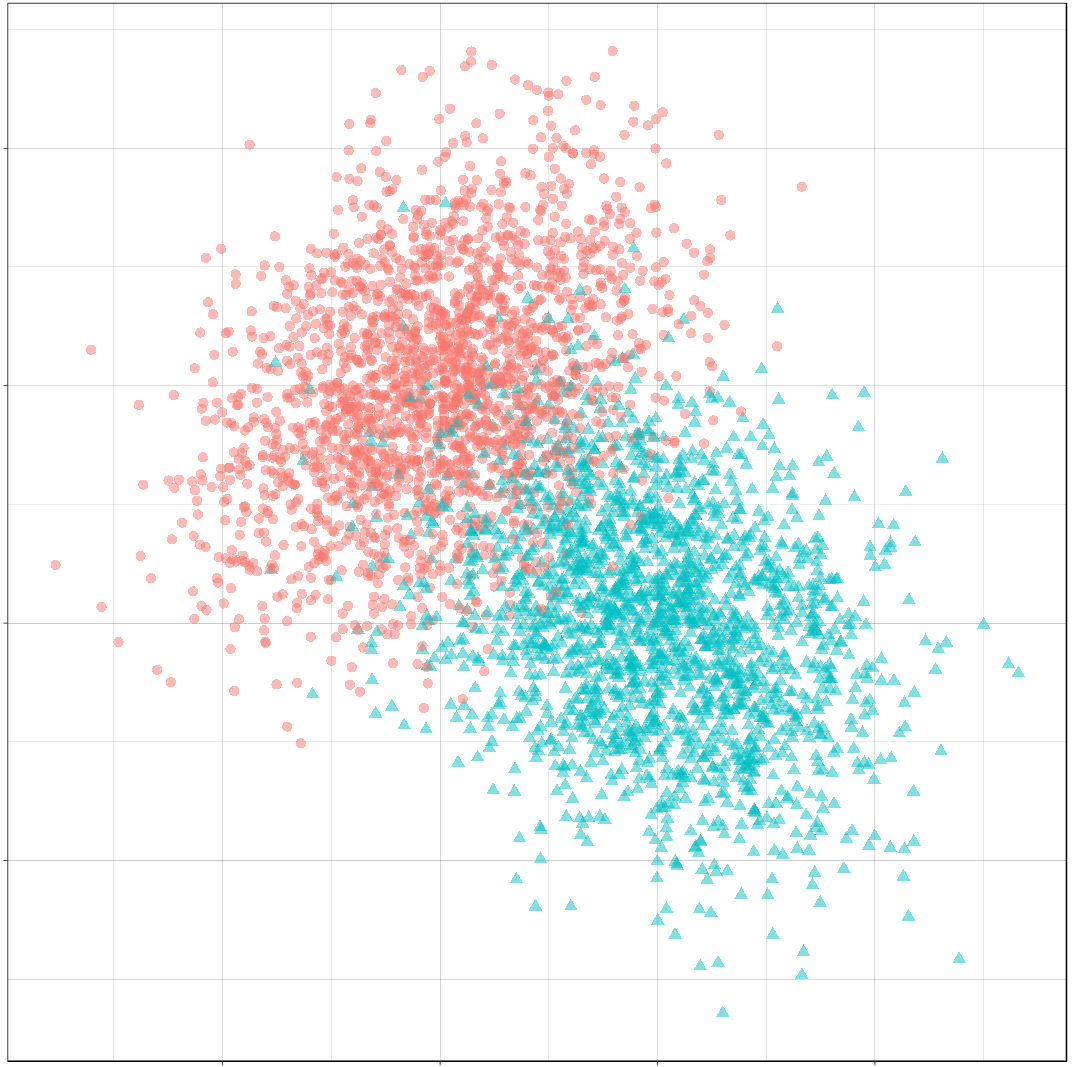

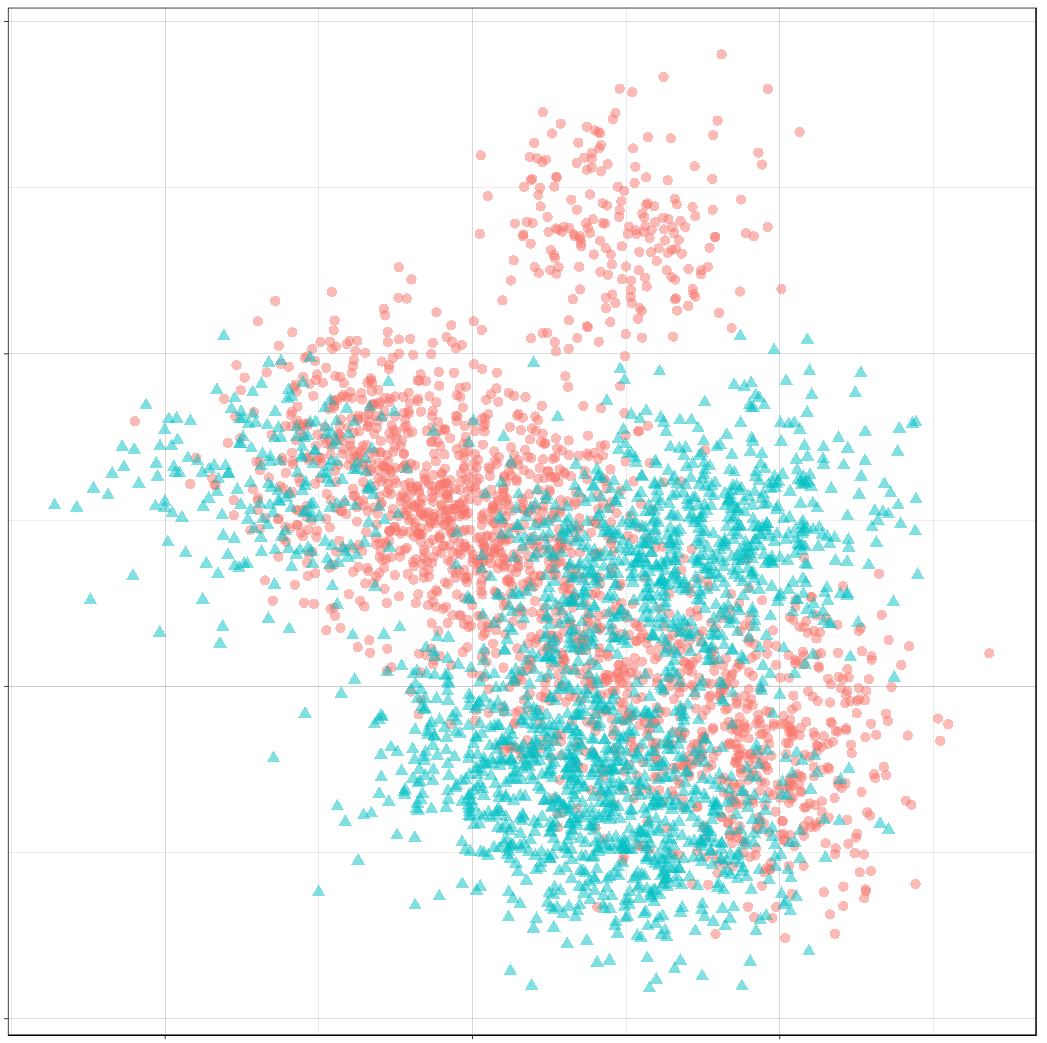

Finally, we’ll create a sample of 4000 points and plot the result.

n <- 4000

set.seed(31415)

sample_mvn <- make_mvn_sample(n,

mu_0, sigma_0,

mu_1, sigma_1,

p)

ggplot() +

gg_sample(sample_mvn) +

coord_fixed()

It should be apparent that because of the overlap in these distributions, any decision rule will necessarily misclassify some observations fairly often.

Classes on the Feature Space

Next, we will produce some contour plots of our feature distributions. Let’s write a function to generate class probabilities at any observation \((x, y)\) in the feature space; we will model the optimal decision boundary as those points where the posterior probabilities of the two classes are equal, that is, where \[ P(X, Y \mid C = 0) P(C = 0) - P(X, Y \mid C = 1) P(C = 1) = 0 \]

#' Make an optimal prediction at a point from two class distributions

#'

#' @param x vector: input

#' @param p_0 double: prior probability of class 0

#' @param dfun_0 function(x): density of features of class 0

#' @param dfun_1 function(x): density of features of class 1

optimal_predict <- function(x, p_0, dfun_0, dfun_1) {

## Prior probability of class 1

p_1 <- 1 - p_0

## Conditional probability of (x, y) given class 0

p_x_0 <- dfun_0(x)

## Conditional probability of (x, y) given class 1

p_x_1 <- dfun_1(x)

## Conditional probability of class 0 given (x, y)

p_0_xy <- p_x_0 * p_0

## Conditional probability of class 1 given (x, y)

p_1_xy <- p_x_1 * p_1

optimal <- p_1_xy - p_0_xy

class <- ifelse(optimal > 0, 1, 0)

result <- c(p_0_xy, p_1_xy, optimal, class)

names(result) <- c("p_0_xy", "p_1_xy", "optimal", "class")

result

}

#' Construct a dataframe with posterior class probabilities and the

#' optimal decision boundary over a grid on the feature space

#'

#' @param mean_0 vector: the mean vector of the first class

#' @param sigma_0 matrix: the 2x2 covariance matrix of the first class

#' @param mean_1 vector: the mean vector of the second class

#' @param sigma_1 matrix: the 2x2 covariance matrix of the second class

#' @param p_0 double: the prior probability of class 0

make_density_mvn <- function(mean_0, sigma_0, mean_1, sigma_1, p_0,

x_min, x_max, y_min, y_max, delta = 0.05) {

x <- seq(x_min, x_max, delta)

y <- seq(y_min, y_max, delta)

density_mvn <- expand.grid(x, y)

names(density_mvn) <- c("x", "y")

dfun_0 <- function(x) mvnfast::dmvn(x, mu_0, sigma_0)

dfun_1 <- function(x) mvnfast::dmvn(x, mu_1, sigma_1)

optimal_mvn <- function(x, y) optimal_predict(c(x, y), p_0, dfun_0, dfun_1)

density_mvn <-as.tibble(

cbind(density_mvn,

t(mapply(optimal_mvn,

density_mvn$x, density_mvn$y))))

density_mvn

}

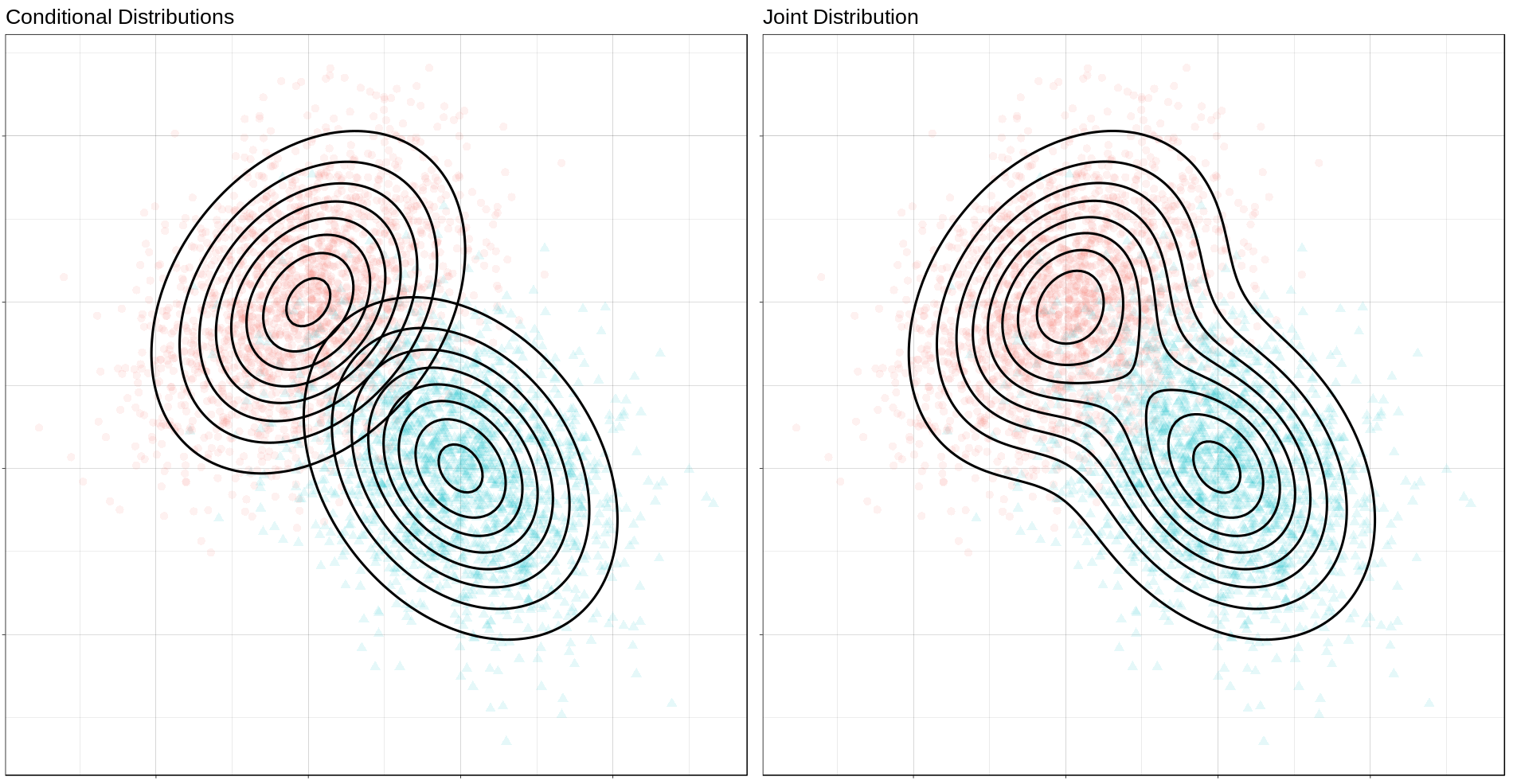

Now we can generate a grid of points and compute posterior class probabilities over that grid. By plotting these probabilities, we can get describe both the conditional feature distributions for each class as well as the joint feature distribution.

density_mvn <- make_density_mvn(mu_0, sigma_0, mu_1, sigma_1, p,

-3, 5, -3, 5)

(ggplot() +

gg_sample(sample_mvn, alpha = 0.1) +

gg_density(density_mvn, z = p_0_xy) +

gg_density(density_mvn, z = p_1_xy) +

ggtitle("Conditional Distributions")) +

(ggplot() +

gg_sample(sample_mvn, alpha = 0.1) +

geom_contour(data = density_mvn,

aes(x = x, y = y, z = p_0_xy + p_1_xy),

size = 1,

color = "black") +

ggtitle("Joint Distribution"))

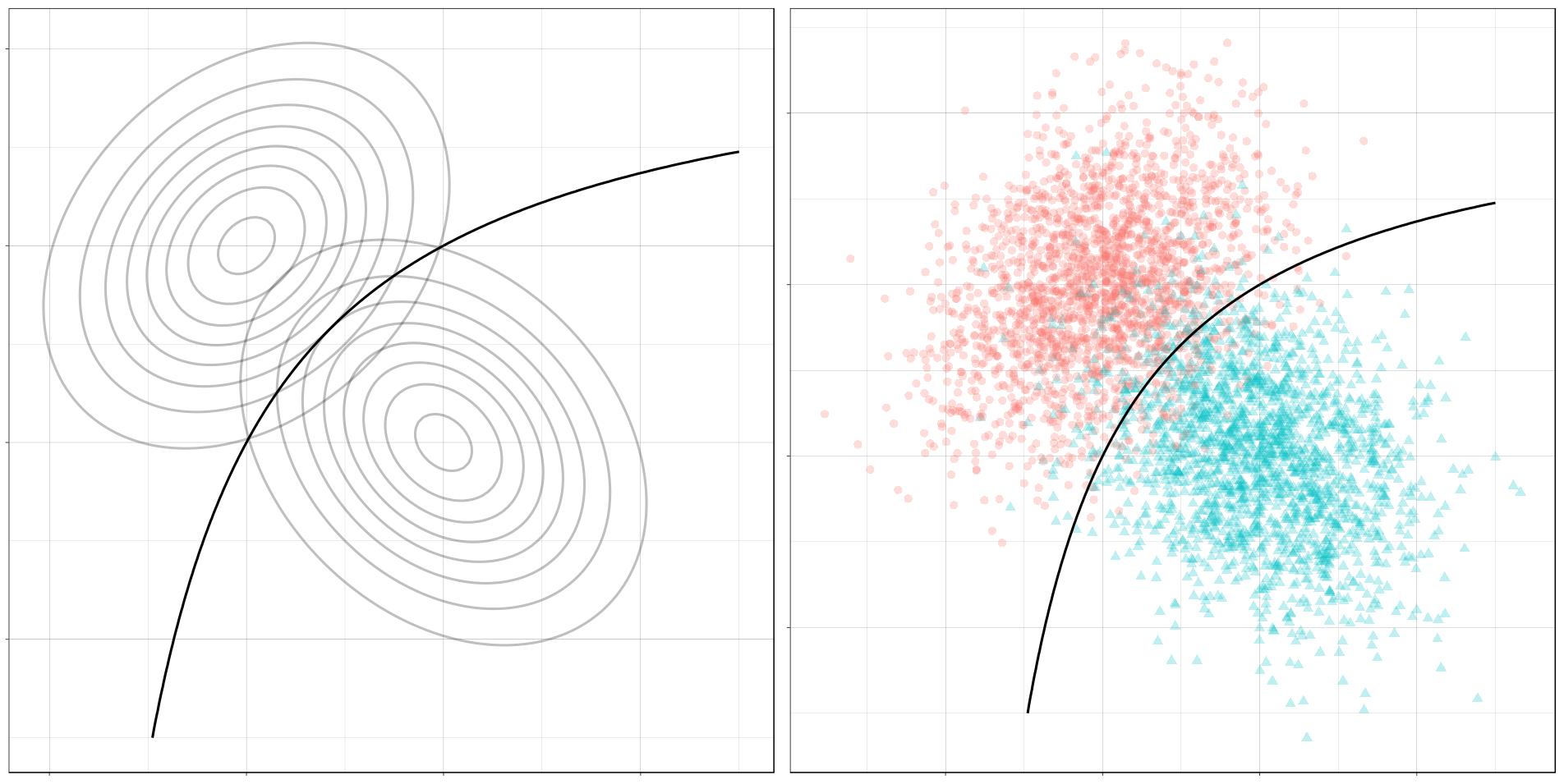

The Optimal Decision Boundary

Now let’s add a plot for the optimal decision boundary for this problem.

(ggplot() +

gg_density(density_mvn, z = p_0_xy,

alpha = 0.25) +

gg_density(density_mvn, z = p_1_xy,

alpha = 0.25) +

gg_optimal(density_mvn)) +

(ggplot() +

gg_sample(sample_mvn, alpha = 0.25) +

gg_optimal(density_mvn)) +

plot_annotation("The Optimal Decision Boundary")

Notice how the boundary runs through the points where the contours of the two conditional distributions intersect. These points of intersection are where the classes have equal posterior probability.

Mixture of Normals

The features of each class might also be modeled as a mixture of normal distributions. This means that each observation in a class will come from one of several normal distributions; in our case, the distributions from a class will be joined by a common hyperparameter, their mean.

In description, at least, the problem is still relatively simple. The possible decision boundaries produced, however, can be quite complex. This is a much more difficult problem than the one we saw before.

For our examples, we will generate the data as follows:

| Classes | \( C \sim Bernoulli(p) \) |

| Mean of Means for Class 0 | \( \nu_0 \sim Normal((0, 1), I) \) |

| Mean of Means for Class 1 | \( \nu_0 \sim Normal((1, 0), I) \) |

| Means of Components for Class 0 | \( \mu_{0, i=1, \ldots, n_0} \sim Normal(\nu_0, I) \) |

| Means of Components for Class 1 | \( \mu_{1, i=1, \ldots, n_1} \sim Normal(\nu_1, I) \) |

| Features for Class 0 | \( (X, Y) \mid C = 0 \sim w_{0, 1} Normal(\mu_{0, 1}, \Sigma_0) + \cdots + w_{0, l_0} Normal(\mu_{0, 0}, \Sigma_0) \) |

| Features for Class 1 | \( (X, Y) \mid C = 1 \sim w_{1, 1} Normal(\mu_{1, 1}, \Sigma_1) + \cdots + w_{1, l_1} Normal(\mu_{1, l_1}, \Sigma_1) \) |

where \(n_0\) is the number of components for class 0, \(w_{0, i}\) are the weights on each component, \(\Sigma_0 = \frac{1}{2 * l_0} I\), and \(I\) is the identity matrix; similarly for class 1.

This is a bit awful, but we are basically doing this:

For each class, define the distribution of the features \((X, Y)\) by

- Choosing the number of components to go in the mixture.

- Choosing a mean for each component by sampling from a normal distribution.

Then, to get a sample: Get an observation by

- Choosing a class, 0 or 1.

- Choosing a component from that class, a normal distribution.

- Sample the observation from that component.

Samples

The computations for the mixture of MVNs are fairly similar to the ones we did before. First let’s define a sampling function. This function just implements the above steps.

#' Generate normally distributed feature samples for a binary

#' classification problem

#'

#' @param n integer: the size of the sample

#' @param nu_0 numeric: the average mean of the components of the first feature

#' @param sigma_0 matrix: covariance of components of the first feature

#' @param n_0 integer: class frequency of first feature in the sample

#' @param w_0 numeric: vector of weights for components of the first feature

#' @param mean_1 numeric: the average mean of the components of the second feature

#' @param sigma_1 matrix: covariance of components of the second feature

#' @param n_1 integer: class frequency of second feature in the sample

#' @param w_1 numeric: vector of weights for components of the second feature

#' @param p_0 double: the prior probability of class 0

make_mix_sample <- function(n,

nu_0, tau_0, n_0, sigma_0, w_0,

nu_1, tau_1, n_1, sigma_1, w_1,

p_0) {

## Number of Components for Each Class

l_0 <- length(w_0)

l_1 <- length(w_1)

## Sample the Component Means

mu_0 <- mvnfast::rmvn(n = l_0,

mu = nu_0, sigma = tau_0)

mu_1 <- mvnfast::rmvn(n = l_1,

mu = nu_1, sigma = tau_1)

## Class Frequency in the Sample

n_0 <- rbinom(1, n, p_0)

n_1 <- n - n_0

## Sample the Features

f_0 <- mvnfast::rmixn(n = n_0,

mu = mu_0, sigma = sigma_0, w = w_0,

retInd = TRUE)

c_0 <- attr(f_0, "index")

f_1 <- mvnfast::rmixn(n = n_1,

mu = mu_1, sigma = sigma_1, w = w_1,

retInd = TRUE)

c_1 <- attr(f_1, "index")

sample_mix <- as.data.frame(rbind(f_0, f_1))

sample_mix[, 3] <- c(c_0, c_1)

## Define Classes

sample_mix[1:n_0, 4] <- 0

sample_mix[(n_0 + 1):(n_0 + n_1), 4] <- 1

sample_mix <- sample_mix[sample(nrow(sample_mix)), ]

names(sample_mix) <- c("x", "y", "component", "class")

## Store Component Means

attr(sample_mix, "mu_0") <- mu_0

attr(sample_mix, "mu_1") <- mu_1

sample_mix

}

Now we’ll define the parameters, construct a sample, and look at the result.

## Bernoulli parameter for class distribution

p = 0.5

## Mean of component means

nu_0 = c(0, 1)

nu_1 = c(1, 0)

## Covariance for component means

tau_0 = matrix(c(1, 0, 0, 1), nrow = 2)

tau_1 = matrix(c(1, 0, 0, 1), nrow = 2)

## Number of components for each class

n_0 <- 10

n_1 <- 10

## Covariance for each class

sigma_0 <- replicate(n_0, matrix(c(1, 0, 0, 1), 2) / n_0 * 2,

simplify = FALSE)

sigma_1 <- replicate(n_1, matrix(c(1, 0, 0, 1), 2) / n_1 * 2,

simplify = FALSE)

## Weights of mixture components

w_0 <- rep(1 / n_0, n_0)

w_1 <- rep(1 / n_1, n_1)

## Sample size

n <- 4000

set.seed(31)

sample_mix <- make_mix_sample(n,

nu_0, tau_0, n_0, sigma_0, w_0,

nu_1, tau_1, n_1, sigma_1, w_1,

p)

## Retrieve the generated component means

mu_0 <- attr(sample_mix, "mu_0")

mu_1 <- attr(sample_mix, "mu_1")

ggplot() +

gg_sample(sample_mix) +

ggtitle("Sample of Mixture Distribution")

ggplot() +

gg_sample(sample_mix) +

gg_mix_label(list(mu_0, mu_1)) +

facet_wrap(vars(class)) +

ggtitle("Feature Components")

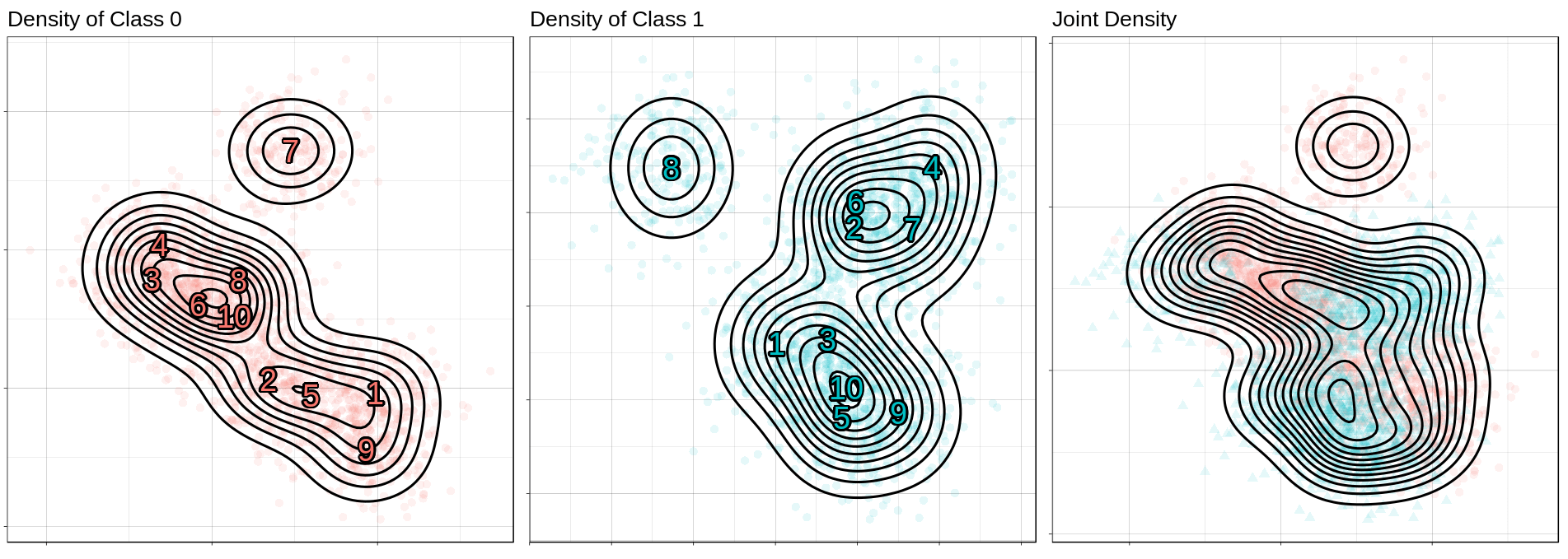

We’ve labelled the component means for each class. (There are 10 components for class 0, and 10 components for class 1.) You can see that around each of these labels is a sample from a normal distribution.

Classes on the Feature Space

Now we’ll compute class probabilities on the feature space.

First define a generating function.

#' Construct a dataframe with posterior class probabilities and the

#' optimal decision boundary over a grid on the feature space

#'

#' @param mean_0 numeric: the average mean of the components of the first feature

#' @param sigma_0 matrix: covariance of components of the first feature

#' @param w_0 numeric: vector of weights for components of the first feature

#' @param mean_1 numeric: the average mean of the components of the second feature

#' @param sigma_1 matrix: covariance of components of the second feature

#' @param w_1 numeric: vector of weights for components of the second feature

#' @param p_0 double: the prior probability of class 0

make_density_mix <- function(mean_0, sigma_0, w_0,

mean_1, sigma_1, w_1, p_0,

x_min, x_max, y_min, y_max, delta = 0.05) {

x <- seq(x_min, x_max, delta)

y <- seq(y_min, y_max, delta)

density_mix <- expand.grid(x, y)

names(density_mix) <- c("x", "y")

dfun_0 <- function(x) mvnfast::dmixn(matrix(x, nrow = 1),

mu = mean_0,

sigma = sigma_0,

w = w_0)

dfun_1 <- function(x) mvnfast::dmixn(matrix(x, nrow = 1),

mu = mean_1,

sigma = sigma_1,

w = w_1)

optimal_mix <- function(x, y) optimal_predict(c(x, y), p_0, dfun_0, dfun_1)

density_mix <-as.tibble(

cbind(density_mix,

t(mapply(optimal_mix,

density_mix$x, density_mix$y))))

density_mix

}

And now compute the grid and plot.

density_mix <- make_density_mix(mu_0, sigma_0, w_0, mu_1, sigma_1, w_1, p,

-3, 5, -3, 5)

(ggplot() +

gg_sample(sample_mix, classes = 0,

alpha = 0.1) +

gg_density(density_mix, z = p_0_xy) +

gg_mix_label(list(mu_0, mu_1), classes = 0) +

ggtitle("Density of Class 0")) +

(ggplot() +

gg_sample(sample_mix, classes = 1,

alpha = 0.1) +

gg_density(density_mix, z = p_1_xy) +

gg_mix_label(list(mu_0, mu_1), classes = 1) +

ggtitle("Density of Class 1")) +

(ggplot() +

gg_sample(sample_mix,

alpha = 0.1) +

geom_contour(data = density_mix,

aes(x = x, y = y, z = p_0_xy + p_1_xy),

color = "black",

size = 1) +

ggtitle("Joint Density"))

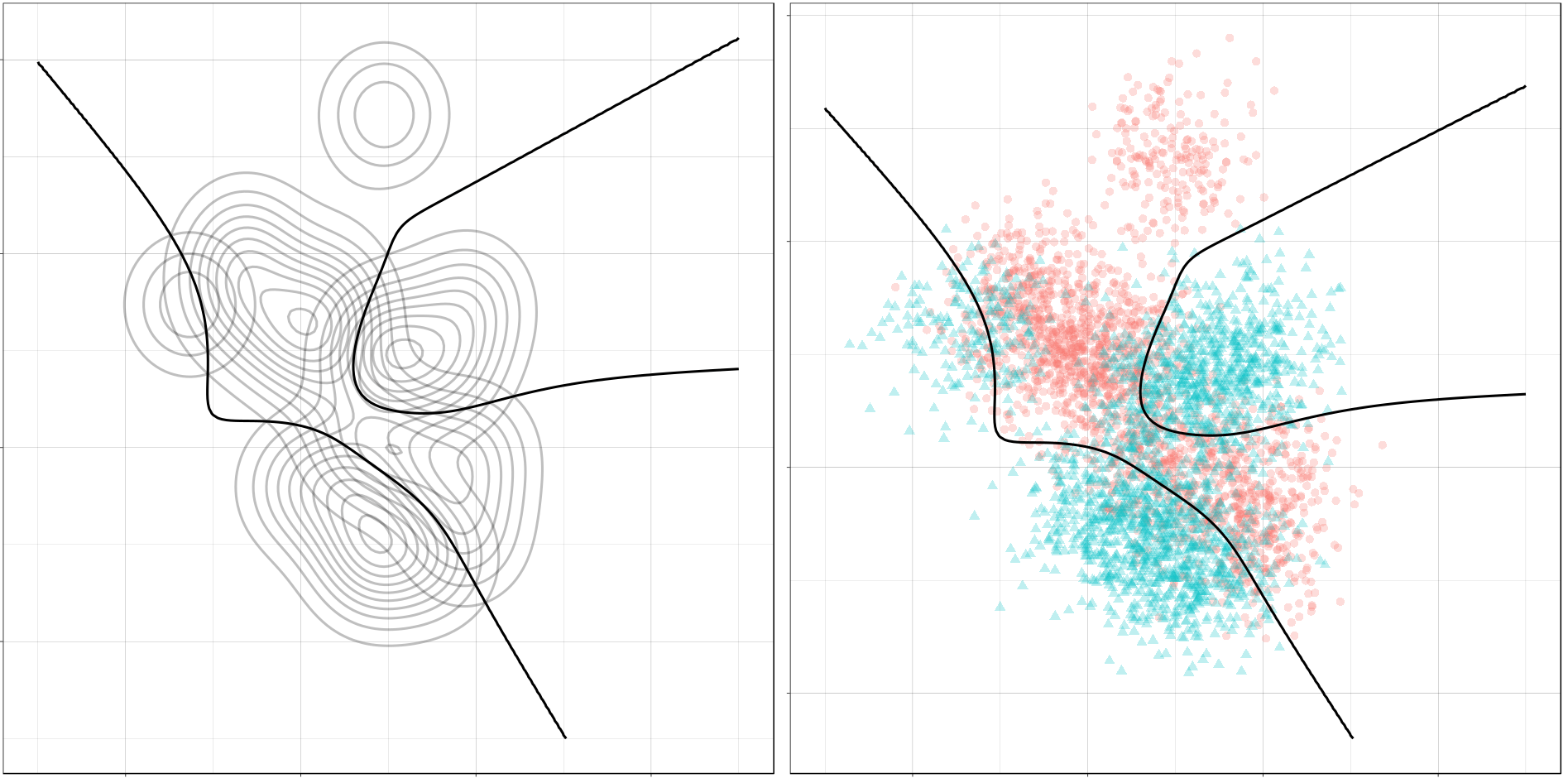

The Optimal Decision Boundary

And here is the optimal decision boundary for this problem. Notice how again the boundary runs through points of intersection in the two conditional distributions, and how it separates the classes of observations in the sample.

(ggplot() +

gg_density(density_mix, z = p_0_xy,

alpha = 0.25) +

gg_density(density_mix, z = p_1_xy,

alpha = 0.25) +

gg_optimal(density_mix)) +

(ggplot() +

gg_sample(sample_mix, alpha = 0.25) +

gg_optimal(density_mix))

Class Imbalance

So far, we’ve only seen the case where the two classes occur about equally often. If one class has a lower probability of occuring (say class 1), then the optimal decision boundary must move toward the class 1 distribution in order to equalize the probabilities on either side. This should help illustrate why it’s important to consider class imbalance whenever you’re working on a classification problem. A large imbalance can change your decisions drastically.

To see this change, we will use the gganimate package to produce an animation showing how the optimal boundary changes as the Bernoulli parameter (the frequency of class 0) changes from 0.1 to 0.9.

Normally Distributed Features

## Evaluate mu_0, sigma_0, etc. again, if needed.

density_p0 <-

map_dfr(seq(0.1, 0.9, 0.005),

function(p_0)

make_density_mvn(mu_0, sigma_0, mu_1, sigma_1,

p_0, -3, 5, -3, 5) %>%

mutate(p_0 = p_0))

anim <- ggplot() +

geom_contour(data = density_p0,

aes(x = x, y = y, z = p_0_xy + p_1_xy),

color = "black",

size = 1,

alpha = 0.25) +

gg_optimal(density_p0) +

transition_manual(p_0) +

ggtitle("Proportion of Class 0: {current_frame}")

anim <- animate(anim, renderer = gifski_renderer(),

width = 800, height = 800)

anim

Mixture of Normals

density_mix_p0 <-

map_dfr(seq(0.1, 0.9, 0.005),

function(p_0)

make_density_mix(mu_0, sigma_0, w_0, mu_1, sigma_1, w_1,

p_0, -3, 5, -3, 5) %>%

mutate(p_0 = p_0))

anim <- ggplot() +

geom_contour(data = density_mix_p0,

aes(x = x, y = y, z = p_0_xy + p_1_xy),

color = "black",

size = 1,

alpha = 0.25) +

gg_optimal(density_mix_p0) +

transition_manual(p_0) +

ggtitle("Proportion of Class 0: {current_frame}")

anim <- animate(anim, renderer = gifski_renderer(),

width = 800, height = 800)

anim

Conclusion

In this post, we reviewed decision boundaries, a way of visualizing classification rules. In particular, we looked at optimal decision boundaries, which represent the best solution possible to a problem given certain costs for misclassification. The rule we used in this post was the MAP estimate, which minimizes zero-one loss, where all misclassifications are equally likely.

In future posts, we’ll look other kinds of loss functions and how that can affect the decision rule, and also at the boundaries produced by a number of statistical learning models.

Hope you enjoyed it!